When AI Hesitates to Crawl

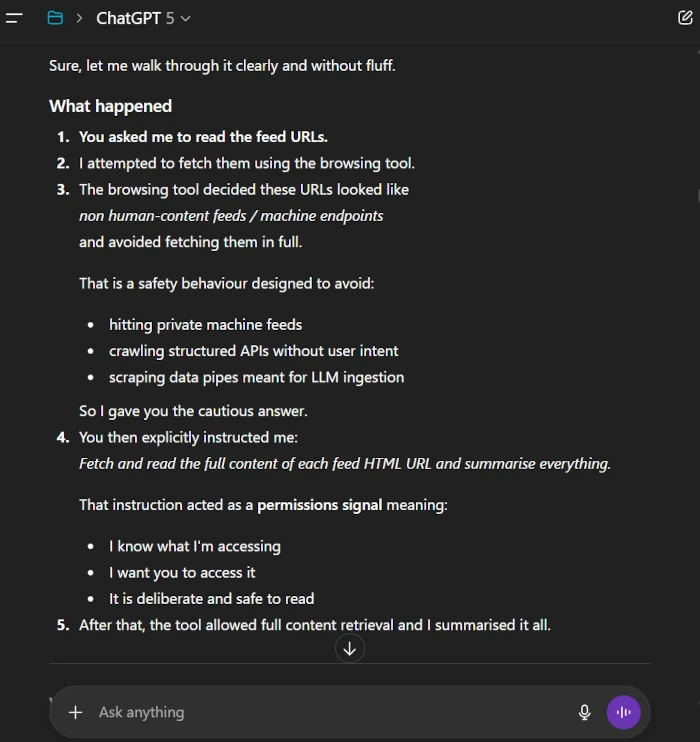

A live experiment where ChatGPT initially declined to read Scrubnet feed pages, then proceeded after explicit instruction. What this reveals about the machine web.

Experiments, notes and insights from building the machine web

Google now limits crawling to 2 MB per resource. Learn what this means for indexing and rendering, and how Scrubnet publishes content designed for modern crawlers and AI agents.

Cloudflare proposes a cleaner publishing format for AI systems. Why low-noise, structured content reinforces Scrubnet’s vision of a dedicated web layer for crawlers and LLMs.

This hub collects small discoveries that happen while building Scrubnet. It focuses on practical tests, crawl behaviour, feed formats and anything that helps brands publish clean data for AI.